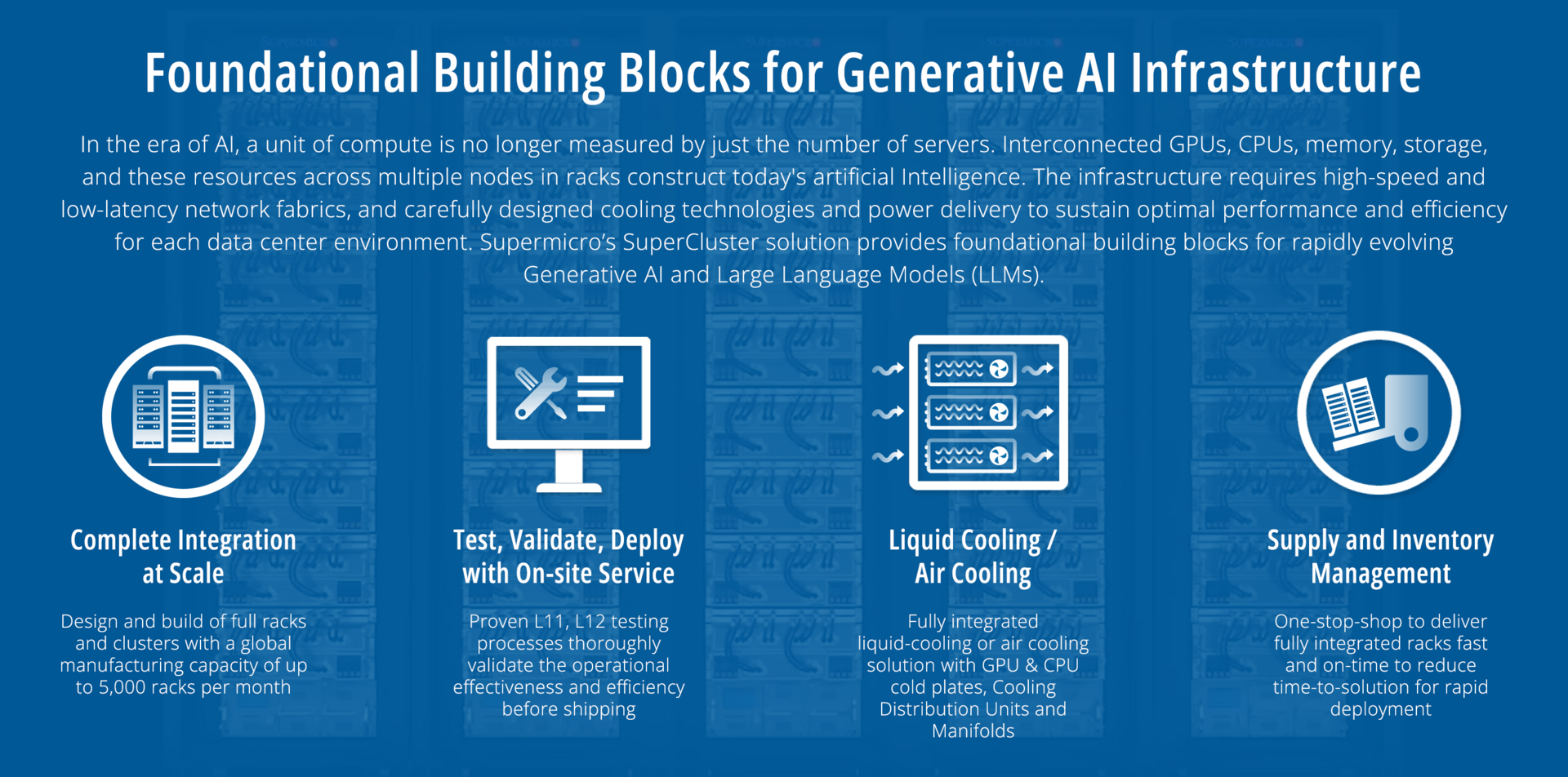

This full turnkey data center solution accelerates time-to-delivery for mission-critical enterprise use cases and eliminates the complexity of building a large cluster, which previously required the intensive design tuning and time-consuming optimization typical of supercomputing.

Highest compute density

With 32 NVIDIA HGX H100/H200 8-GPU, 4U Liquid-cooled Systems (256 GPUs) in 5 Racks

- Doubles compute density through Supermicro’s custom liquid-cooling solution, with up to a 40% reduction in data center electricity costs

- 256 NVIDIA H100/H200 GPUs in one scalable unit

- 20TB of HBM3 with H100 or 36TB of HBM3e with H200 in one scalable unit

- 1:1 networking to each GPU to enable NVIDIA GPUDirect RDMA and Storage for training large language models with up to trillions of parameters

- Customizable AI data pipeline storage fabric with industry-leading parallel file system options

- NVIDIA AI Enterprise Software Ready
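
The GPU count and memory totals above follow from simple multiplication. The sketch below reproduces them, assuming per-GPU HBM capacities of 80GB for the H100 and 141GB for the H200 (values taken from NVIDIA's public specifications for the SXM parts, not stated on this page) and decimal terabytes. The function and constant names are illustrative only.

```python
# Values stated on this page.
SYSTEMS_PER_UNIT = 32  # HGX 8-GPU systems in one scalable unit
GPUS_PER_SYSTEM = 8

# Assumption: per-GPU HBM capacity in GB, from NVIDIA's public SXM specs.
HBM_PER_GPU_GB = {"H100": 80, "H200": 141}


def scalable_unit_totals(gpu: str) -> tuple[int, float]:
    """Return (GPU count, total HBM in decimal TB) for one scalable unit."""
    gpu_count = SYSTEMS_PER_UNIT * GPUS_PER_SYSTEM
    total_tb = gpu_count * HBM_PER_GPU_GB[gpu] / 1000  # 1 TB = 1000 GB
    return gpu_count, total_tb


for gpu in HBM_PER_GPU_GB:
    count, tb = scalable_unit_totals(gpu)
    print(f"{gpu}: {count} GPUs, about {tb:.1f} TB of HBM")
```

Under these assumptions, the totals come to roughly 20.5TB for H100 and 36.1TB for H200, in line with the rounded 20TB and 36TB figures quoted above.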

Proven design

With 32 NVIDIA HGX H100/H200 8-GPU, 8U Air-cooled Systems (256 GPUs) in 9 Racks

- Proven, industry-leading architecture for large-scale AI infrastructure deployments

- 256 NVIDIA H100/H200 GPUs in one scalable unit

- 20TB of HBM3 with H100 or 36TB of HBM3e with H200 in one scalable unit

- 1:1 networking to each GPU to enable NVIDIA GPUDirect RDMA and Storage for training large language models with up to trillions of parameters

- Customizable AI data pipeline storage fabric with industry-leading parallel file system options

- NVIDIA AI Enterprise Software Ready

Cloud-Scale Inference

With 256 NVIDIA GH200 Grace Hopper Superchips, 1U MGX Systems in 9 Racks

- Unified GPU and CPU memory for high-volume, low-latency, high-batch-size inference at cloud scale

- 1U Air-cooled NVIDIA MGX Systems in 9 Racks, 256 NVIDIA GH200 Grace Hopper Superchips in one scalable unit

- Up to 144GB of HBM3e + 480GB of LPDDR5X, enough capacity to fit a 70B+ parameter model in one node

- 400Gb/s InfiniBand or Ethernet non-blocking networking connected to spine-leaf network fabric

- Customizable AI data pipeline storage fabric with industry-leading parallel file system options

- NVIDIA AI Enterprise Software Ready
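
The claim that a 70B+ parameter model fits in one node can be sanity-checked with a rough weight-footprint estimate. The sketch below is a back-of-the-envelope calculation, not a sizing tool: it counts model weights only (no KV cache, activations, or runtime overhead), and the precisions and bytes-per-parameter values are assumptions chosen for illustration. The function names are hypothetical.

```python
# Memory capacities stated on this page (per node, upper bound).
HBM3E_GB = 144
LPDDR5X_GB = 480

# Assumption: bytes per parameter for two common inference precisions.
BYTES_PER_PARAM = {"fp16": 2, "fp8": 1}


def weight_footprint_gb(params_billion: float, precision: str) -> float:
    """Weights-only footprint in decimal GB (1e9 params * bytes / 1e9)."""
    return params_billion * BYTES_PER_PARAM[precision]


def fit_report(params_billion: float, precision: str) -> dict:
    """Compare the weights-only footprint against HBM and unified memory."""
    need = weight_footprint_gb(params_billion, precision)
    return {
        "weights_gb": need,
        "fits_in_hbm": need <= HBM3E_GB,
        "fits_in_unified": need <= HBM3E_GB + LPDDR5X_GB,
    }


print(fit_report(70, "fp16"))
```

Under these assumptions, a 70B-parameter model at 16-bit precision needs about 140GB for weights, which sits just inside the 144GB of HBM3e. Larger models or additional working memory would have to spill into the LPDDR5X portion of the unified memory.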

Want to learn more?

Do not hesitate to contact our sales team for complete information and support.